Build a

Self-Improving Agent

One OpenAI-compatible API to route, trace, and evaluate every AI request — across every major provider. Ship smarter, pay less.

Free forever · No credit card · Cloud or self-host

Works with every major provider

Everything you need to

ship AI at scale

Route, trace, evaluate, and distill — one platform, every provider, zero vendor lock-in.

One endpoint. Every LLM provider.

One OpenAI-compatible API that routes to every major provider. Swap providers in one line. Automatic fallbacks keep you online when things go sideways.

import opentracy as ot # Call any model — one line response = ot.completion( model="openai/gpt-4o-mini", messages=[{"role": "user", "content": "Hello!"}], fallbacks=["anthropic/claude-3"] ) print(response.choices[0].message.content) print(f"Cost: ${response._cost:.6f}")

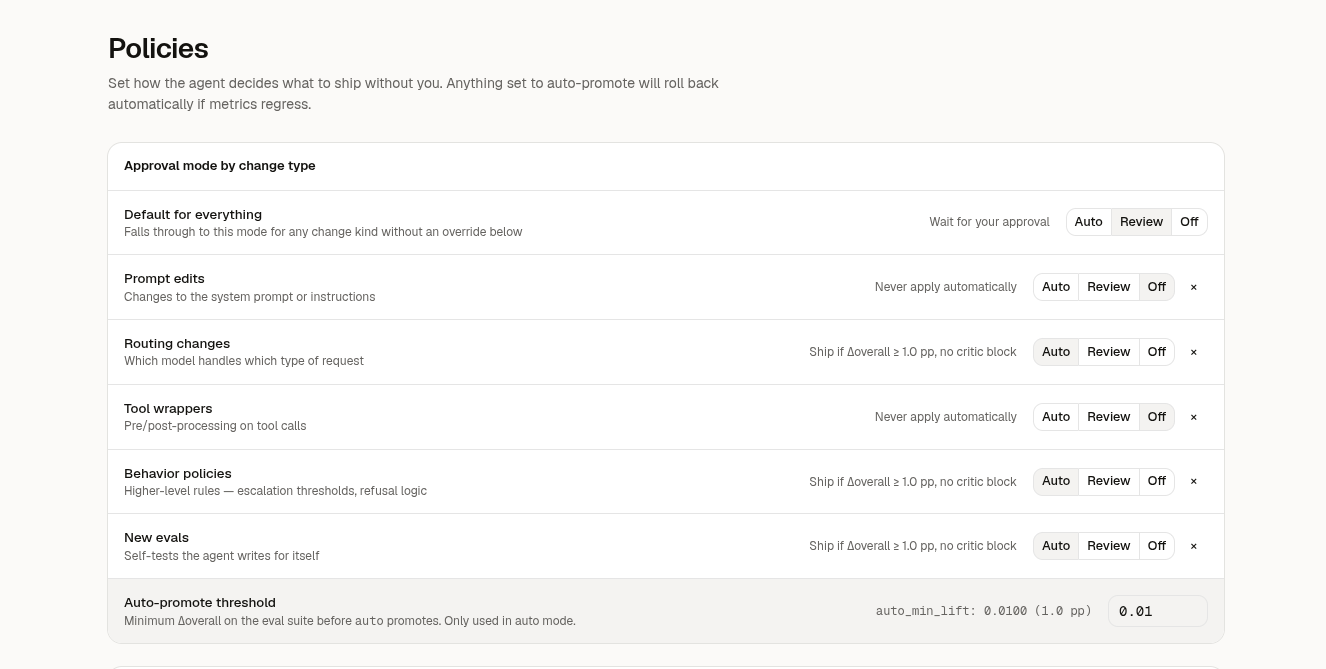

Route smarter. Pay less.

Automatically send simple prompts to fast, cheap models and route complex reasoning to the most capable one - across any provider, no code changes.

Know where every dollar goes.

Per-token pricing on 300+ models, broken down by model, user, or feature. Set budget alerts and hard caps - no more end-of-month surprises.

Complete visibility into every request.

Every request logged with full input, output, cost, latency, and model metadata. AI-powered scanning detects hallucinations before your users do.

Explore live traces

Train your own model.

Turn production traces into fine-tuning datasets automatically. Get frontier-model quality from a model you own - at a fraction of the cost.

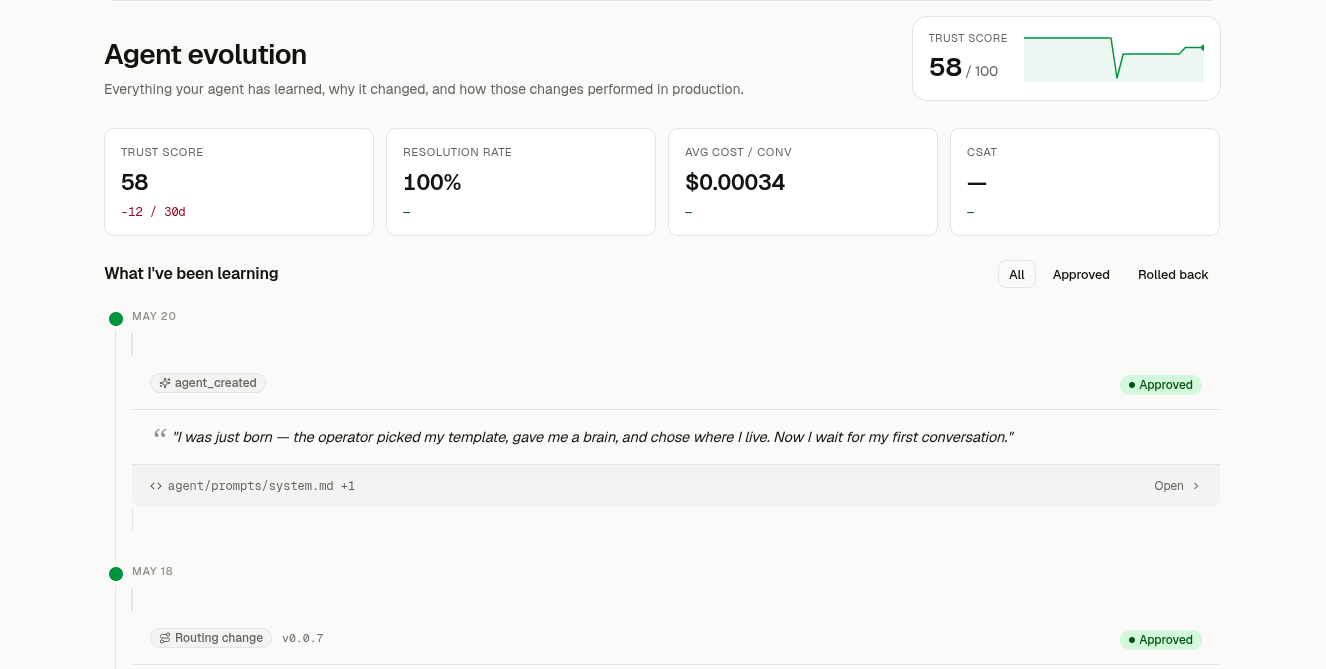

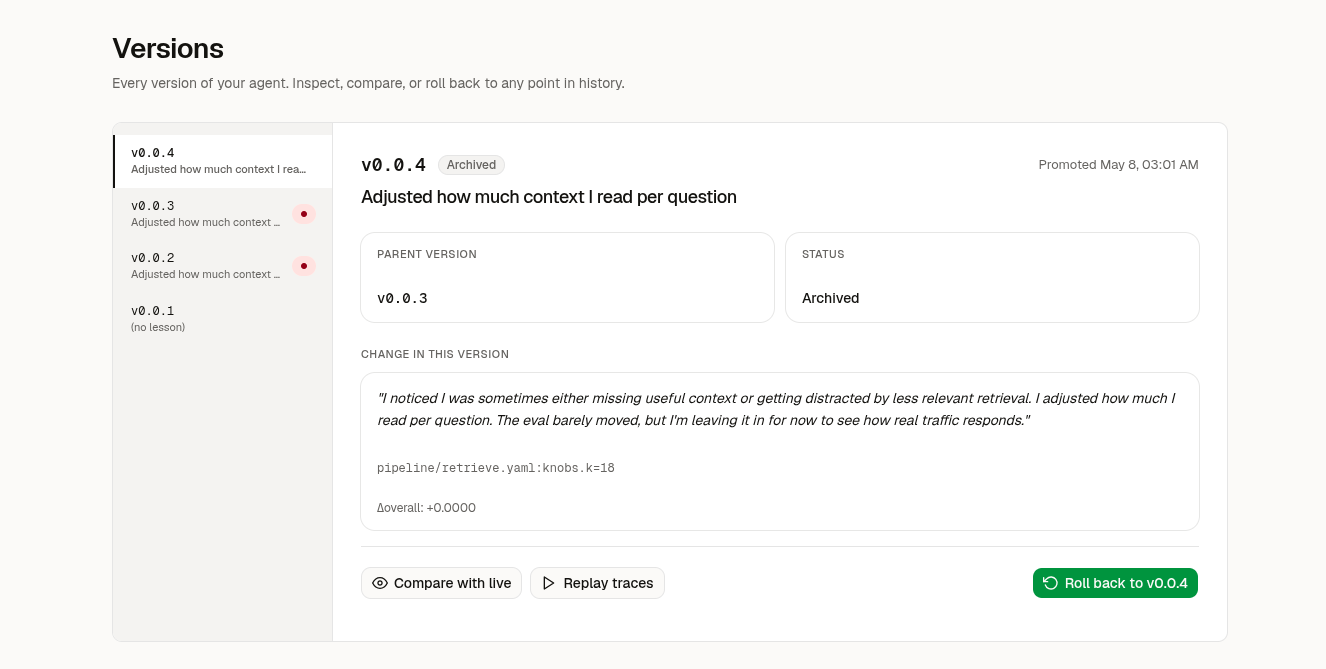

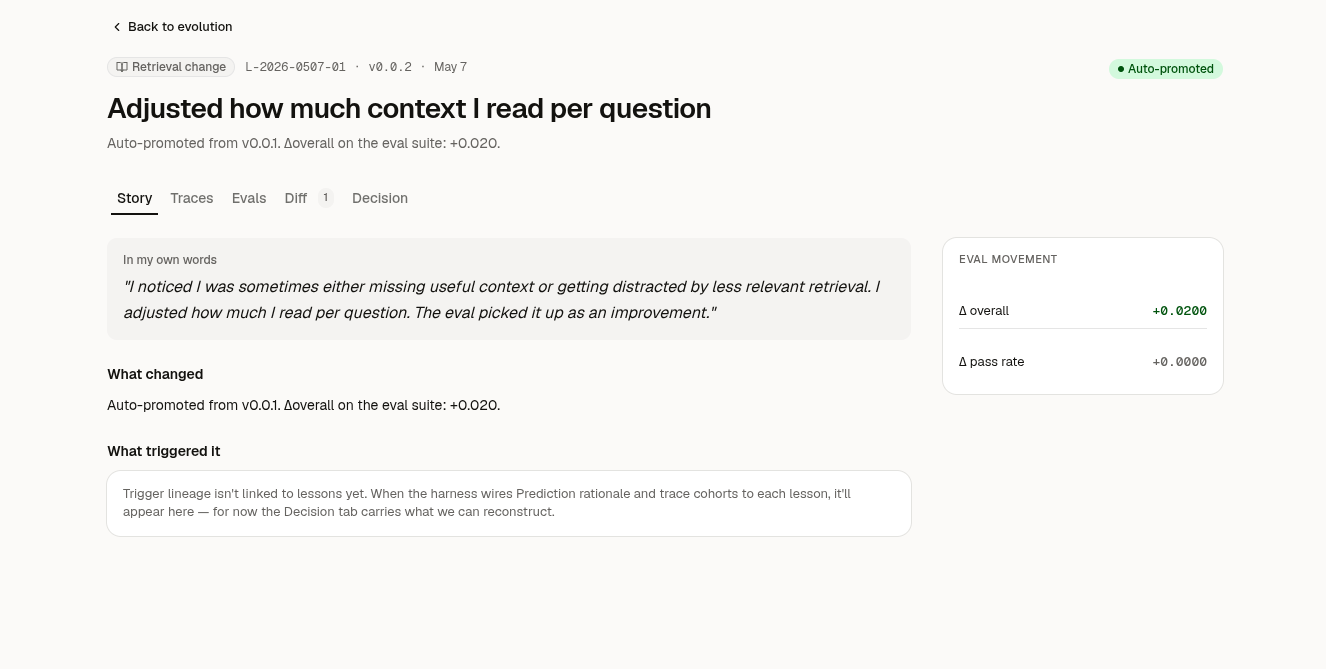

Catch drops before users do.

Continuous evaluations on production traffic detect regressions and hallucinations automatically. Set thresholds, get alerts, stay confident.

Simple. Transparent.

Start free. Scale when you're ready. No hidden fees, ever.

Perfect for side projects and experimentation.

- 10+ providers

- 10k requests/month

- Basic analytics

- Community support

For teams shipping real products with real users.

- Everything in Free

- 500k requests/month

- Smart routing

- Cost tracking & alerts

- Priority support

For large-scale AI deployments with custom needs.

- Unlimited requests

- Model distillation

- SSO & SAML

- SLA & dedicated support

- On-prem option

Frequently asked questions

Answers to the most common pricing and deployment questions.

What counts as a request?

Each API call through OpenTracy counts as one request. Both successful and failed calls are counted.

Can I self-host OpenTracy?

Yes. OpenTracy is open source (MIT). You can self-host the full stack with Docker. Starter and Enterprise plans add managed features on top.

Which providers are supported?

OpenAI, Anthropic, Google Gemini, Mistral, Groq, AWS Bedrock, Azure OpenAI, Cohere, DeepSeek, Together AI, Fireworks, Ollama, and OpenRouter.

How does the free trial work?

14 days of Starter features, no credit card required. After the trial, you move to the Free plan automatically.

Is my data secure?

Yes. SOC 2 Type II certified, GDPR compliant. Enterprise plans include VPC deployment and BYOK encryption.

Can I switch plans anytime?

Yes. Upgrade or downgrade at any time. Changes take effect on your next billing cycle.

Community

Open source, open development. Build with us.

Open source. Self-host or cloud.

Run on your own infrastructure with full control, or use our managed cloud. MIT licensed, no vendor lock-in.

Free tier available. No credit card required.